Censorship of Nudity on YouTube Gives All Users the Power to Censor

Update April 2018: Since this article was first published in 2014, YouTube seems to have veered more towards adding an “age restriction” to videos instead of taking them all down (they have been age-restricting content since 2010). Of course this is still censorship, and this system is deeply flawed. It’s not just minors or users with “restricted mode” enabled who won’t find the videos, but also anyone who isn’t signed in to a YouTube account. This means these videos reach far fewer viewers than unrestricted ones.

The type of content that gets age-restricted includes graphic violence, vulgar language, nudity and “sexually suggestive” material, and videos depicting dangerous activities. So while I’d agree with filtering pornography or disturbing violence for minors, I don’t agree with suppressing educational sex-ed or health videos, or naturist videos with simple nudity. (Teenagers especially need access to quality sex-ed online!) From my own experience using the site, it definitely doesn’t take much to get the restricted label on a video with nudity. It can happen with videos that show body painting or even just female nipples in a non-sexual context.

YouTube applies its content restrictions the same way it has handled video removal: it relies on users to flag videos. This means that whether your content gets flagged in the first place depends on which users see it and how they feel about it. If flagged, it’s supposed to go to YouTube’s review team, where one person will make a snap decision on your video. It’s the same issue of guidelines being haphazardly applied.

Last year the media giant came under fire for age-restricting LGBT content, including non-sexual music videos and a Human Rights Watch video about a discriminatory anti-gay law in Utah. YT responded, “We recognize that some videos are incorrectly labelled by our automated system and we realize it’s very important to get this right.” This suggests it’s not just humans flagging and labeling videos. And just how many videos wind up getting labeled “incorrectly” or mistakenly removed? …A LOT. I’d guess it’s thousands upon thousands each month.

None of this is to say that YouTube has stopped removing videos. They’re definitely still doing that, too, based on the same vague guidelines. Just look at Cleo of ToplessTopics, who created videos to advocate for topfree equality. She posted videos of just herself, topfree and discussing topfreedom issues in an educational context, and YouTube repeatedly removed them, restricted her account, etc. (She has since given up posting on the site.)

In 2016, YouTube also drew the ire of users when they started demonetizing videos that weren’t “advertiser friendly.” So ads could not be displayed on a video with graphic violence or nudity. But a YouTuber didn’t even have to show scenes of violence or war or nudism to make this happen – all they had to do was talk about it in their video! Some popular YouTubers publicly stated that they would not be censoring themselves in order to keep monetizing all of their videos. The problem is, other users DEFINITELY have censored themselves, and will keep doing so, in order to make money on whatever they create.

Where else can we post videos to share with the world? Vimeo is still the go-to video platform alternative, but it’s a much smaller site with 170 million users. That’s about 17% of YouTube’s user base of 1 billion. YouTube is also owned by Google, so of course its videos are favored in Google search results. Nevertheless, I use Vimeo and encourage others to post there instead of (or in addition to) YT.

That concludes my update. Read about my previous experiences with YouTube censorship below!

————-

A little over a week ago, I went into our YouTube channel with plans to upload a new video. But before I could do so, I was slapped with a warning. Our short naturist promo video was reported by a user, reviewed by a YouTube admin (I guess) and taken down for violating the Terms of Use. It had been up for 3 years and had over 200,000 views.

The offending video:

YouTube has become so big, they’ve essentially lost control over their content. With thousands of videos uploaded daily, it becomes impossible to find, review and remove every single delinquent video. So what’s their solution?

YouTube now relies on users to report videos that violate their terms of use. In other words, users now have the power to decide what is and isn’t too obscene for YouTube. It doesn’t matter if the video has been on the site for years and accumulated hundreds of thousands or even millions of views. It doesn’t even matter if the report is reviewed by a YouTube admin, who presumably makes the final decision on leaving it up or removing it. Because, were it not for that one user who reported the video, it would still be sitting on YouTube gaining more views.

All it takes is one offended viewer and a few clicks, and the video is gone.

In a video on how to censor, I mean flag, content, YouTube actually explains how the users are now responsible for helping to monitor content. Though they refer people to their (vague and practically useless) community guidelines, in this video they state, “That’s why we rely on our community of over 280 million people to help flag content they believe is inappropriate. The YouTube flag is the most important tool for telling us about content you think should not appear on YouTube.”

(They have a new, shorter version of this how-to video, but I find the old one is inadvertently more honest.)

So YouTube is basically like, “well our site is so vast, we’re just going to hand off this monitoring responsibility thing to our 280 million users!” Very sneaky, YouTube! In the mind of the user, YouTube would now seem much less accountable for what appears on the site. It also empowers users to act on inappropriate content, gives them a sense of duty to help monitor content and gives people a simple button to click when they see something that offends them (whether it violates the terms of use or not).

So we’re supposed to believe that 280 million people, and YouTube reviewers, are capable of evenly applying some vague community guidelines to report inappropriate content. Or if not the guidelines, they can just report content based on what they believe. Solid plan, right? What could possibly go wrong?

So what happens if a video was truly unjustly removed? In the case of our video, I couldn’t find any way to appeal it. It’s like they just took that option away, and it was a done deal. So we’re stuck with a 6-month strike, whether it was justified or not.

A few months ago, I’d created a parody “Facebook Look Back” video to make a point about Facebook censorship. Ironically, it got reported and censored on YouTube. I was able to appeal it once, but my appeal was rejected. This is why it’s so funny that they state “we encourage free speech” in the flagging video above.

This censorship is absolutely ridiculous. I thought Facebook was the evil empire of Internet censorship, but Google (which owns YouTube) is worse.

Why was our video removed? It violated their policy on nudity and sex. I can only assume the offending part was the two uncovered female breasts.

Here’s what they say in their Community Guidelines on “Sex and Nudity”:

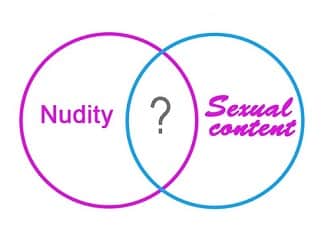

“Most nudity is not allowed, particularly if it is in a sexual context. Generally if a video is intended to be sexually provocative, it is less likely to be acceptable for YouTube. There are exceptions for some educational, documentary, scientific, and artistic content, but only if that is the sole purpose of the video and it is not gratuitously graphic. For example, a documentary on breast cancer would be appropriate, but posting clips out of context from the documentary might not be.”

This policy is vague and inherently subjective. There are no concrete guidelines. “Art” is always subjective. What is “nudity” exactly? What is art? What qualifies something as educational?

YouTube didn’t always have the policies it has today. At one time, nudity wasn’t even allowed on the site. Period. But I’m sure they realized no nudity meant censoring millions of works of art. So in 2010 they changed their policy to allow nudity in the context of art. There’s just one problem. Who decides what’s art and what’s not?

Having no specific guidelines means every user is at the mercy of every other user and YouTube admin. The censorship becomes completely arbitrary and inconsistent. Uploading a video with any sort of taboo content is like a gamble. Maybe it’ll stay up, maybe not. Maybe two years or five years will go by before it’s taken down. Who knows.

Judging by the amount of pornography on YouTube right now, the system clearly isn’t working. There are tons of porn videos. TONS.

The same “evolution” has occurred with Facebook, which now claims that content only comes to their attention when it’s reported by a user. So this also creates a system where the censorship is totally random. Sometimes content is left alone, and sometimes it’s taken down. It doesn’t matter whether a post or photo or video actually violates the community standards or not. Facebook has repeatedly stated that breastfeeding photos are allowed, and yet these types of photos continuously get removed.

When they get called out for it in the media, their response is like, “We’re sorry. This almost NEVER happens. There’s just SO much content on our site, and it’s so darn hard to manage! If we fixed it, how would we find the time to develop our elaborate advertising schemes and violate users’ privacy without them knowing about it?”

I understand, Facebook. Technology is hard. It’s hard for Google, too. Lucky for you guys, nobody has successfully taken a stand in a big way and forced you to rewrite all the rules. But eventually, the time will come when people with more influence than us will do something about this.

The current system is shit, and even Google knows that. My solution for them is to give up trying to censor the most inane content. It’s a losing battle. The best thing to do is work on taking down illegal material and let everything else be.

So for now, #boycottyoutube. We’re still going to put videos on YouTube, but they’ll be in a style similar to my censored Facebook Look Back video. We’ll use their own website to make a point and drive users to Vimeo.

One last note ’cause I know what some of you are thinking – “But YouTube is a free service. There are alternatives, and you don’t have to use it.”

1. Google is a massive empire. Where do you take your searches? Do you Bing that shit? No, you Google it. Where do you go first to find a video clip? Google might suck, but it dominates the Internet. Telling someone to just leave is like telling them to go do their searches on Yahoo! from now on. You’re not going to get the same results.

2. It’s not really free. You pay with your eyeballs on the advertisements. And no doubt, as long as you’re signed in, Google is tracking your every move and figuring out how to monetize that information. Google is not your friend.

YouTube Nudity Censorship Is Out of Control was published by – Felicity’s Blog